Monday, December 9, 2013

Friday, October 11, 2013

Tuesday, October 1, 2013

Rewired nerves control robotic leg

“Mind over matter” has long been a saying left to mystics and believers in supernatural phenomena. However, with a new breakthrough in biomedical technology, thoughts can now directly control physical objects.

A team of biomedical engineers led by Levi Hargrove at the Rehabilitation Institute of Chicago in Illinois reported a noteworthy breakthrough in the New England Journal of Medicine. The patient that you see in the video above is a 32-year-old man whose knee and lower leg were amputated in 2009 after a motorcycle accident. The prosthetic leg that you see isn’t a standard-grade prosthetic; it is wired directly into the patient’s muscles, giving him full control over the prosthetic simply by thinking about moving his leg. In a sense, it hijacks the signal that would be sent down the hamstring to the missing foot.

The major advancement in this technology is that the patient no longer requires a remote-control switch or exaggerated movements to tell the robotic leg to execute a certain movement.

“To our knowledge, this is the first time that neural signals have been used to control both a motorized knee and ankle prosthesis,” according to Hargrove.

In past experiments with robotic prosthetics, researchers have shown that paralyzed individuals could move a robotic arm using their thoughts. One example is Matt Nagle, the first person to control an artificial hand using a brain-computer interface (BCI), as part of the first nine-month human trial of Cyberkinetics’ BrainGate chip implant. What separates the technology that Matt used from that of our current prosthetic user is that, instead of using a typical BCI, the new leg uses muscle signals to amplify the messages sent by the brain when the person wants to move.

“In order to use muscles as amplifiers, surgeons redirect the nerves that previously controlled a part of the patient’s lower leg muscles so that they would cause the muscles in his thigh to contract in a technique called targeted muscle reinnervation.” – Nature

They then used the sensors embedded in the robotic leg to measure the electrical pulses created by contractions of the reinnervated muscle and the existing thigh muscles. When researchers combined all of this data with the additional information from the sensors, the patient was able to use the prosthetic more accurately than when attempting to control the leg with its sensors alone.

Researchers hope that within the next three to five years this technology will be available to the public, helping give mobility back to people who have lost a limb.

Citation

http://www.nature.com/news/rewired-nerves-control-robotic-leg-1.13818

Monday, September 30, 2013

Researchers Demonstrate 'Accelerator on a Chip'

Menlo Park, Calif. — In an advance that could dramatically shrink particle accelerators for science and medicine, researchers used a laser to accelerate electrons at a rate 10 times higher than conventional technology in a nanostructured glass chip smaller than a grain of rice.

The achievement was reported today in Nature by a team including scientists from the U.S. Department of Energy’s (DOE) SLAC National Accelerator Laboratory and Stanford University.

“We still have a number of challenges before this technology becomes practical for real-world use, but eventually it would substantially reduce the size and cost of future high-energy particle colliders for exploring the world of fundamental particles and forces,” said Joel England, the SLAC physicist who led the experiments. “It could also help enable compact accelerators and X-ray devices for security scanning, medical therapy and imaging, and research in biology and materials science.”

Because it employs commercial lasers and low-cost, mass-production techniques, the researchers believe it will set the stage for new generations of "tabletop" accelerators.

At its full potential, the new “accelerator on a chip” could match the accelerating power of SLAC’s 2-mile-long linear accelerator in just 100 feet, and deliver a million more electron pulses per second.

This initial demonstration achieved an acceleration gradient, or amount of energy gained per length, of 300 million electronvolts per meter. That's roughly 10 times the acceleration provided by the current SLAC linear accelerator.

“Our ultimate goal for this structure is 1 billion electronvolts per meter, and we’re already one-third of the way in our first experiment,” said Stanford Professor Robert Byer, the principal investigator for this research.
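The gradient figures above reduce to simple arithmetic: energy gained equals gradient times length. The short Python sketch below works through the numbers quoted in this release (300 million electronvolts per meter demonstrated, a goal of 1 billion electronvolts per meter, the half-millimeter chip, and a 100-foot device). The function and variable names are illustrative only, and the sketch assumes the gradient stays constant along the whole length, which a real machine would only approximate.

```python
# Energy gain = acceleration gradient x length.
# Assumption: the gradient is constant over the whole length.
FEET_TO_METERS = 0.3048  # exact by definition


def energy_gain_ev(gradient_ev_per_m, length_m):
    """Return the energy gained by one electron, in electronvolts."""
    return gradient_ev_per_m * length_m


demonstrated = 300e6  # eV per meter, reported for this experiment
goal = 1e9            # eV per meter, the stated ultimate goal

# Gain across the half-millimeter chip at the demonstrated gradient.
chip_gain = energy_gain_ev(demonstrated, 0.5e-3)

# Gain across 100 feet if the goal gradient were reached.
goal_gain_100ft = energy_gain_ev(goal, 100 * FEET_TO_METERS)

# How far the first experiment is toward the goal gradient.
fraction_of_goal = demonstrated / goal

print(f"Gain over the 0.5 mm chip: {chip_gain / 1e3:.0f} keV")
print(f"Gain over 100 ft at the goal gradient: {goal_gain_100ft / 1e9:.1f} GeV")
print(f"Progress toward the goal gradient: {fraction_of_goal:.0%}")
```

Under these assumptions the chip adds on the order of 150 thousand electronvolts per pass, and a 100-foot structure at the goal gradient would add roughly 30 billion electronvolts, which is the scale behind the comparison with the 2-mile linear accelerator.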

This animation explains how the accelerator on a chip uses infrared laser light to accelerate electrons to increasingly higher energies. (Greg Stewart/SLAC)

How It Works

Today’s accelerators use microwaves to boost the energy of electrons. Researchers have been looking for more economical alternatives, and this new technique, which uses ultrafast lasers to drive the accelerator, is a leading candidate.

Particles are generally accelerated in two stages. First they are boosted to nearly the speed of light. Then any additional acceleration increases their energy, but not their speed; this is the challenging part.

In the accelerator-on-a-chip experiments, electrons are first accelerated to near light-speed in a conventional accelerator. Then they are focused into a tiny, half-micron-high channel within a fused silica glass chip just half a millimeter long. The channel had been patterned with precisely spaced nanoscale ridges. Infrared laser light shining on the pattern generates electrical fields that interact with the electrons in the channel to boost their energy. (See the accompanying animation for more detail.)

Turning the accelerator on a chip into a full-fledged tabletop accelerator will require a more compact way to get the electrons up to speed before they enter the device.

A collaborating research group in Germany, led by Peter Hommelhoff at Friedrich Alexander University and the Max Planck Institute of Quantum Optics, has been looking for such a solution. It simultaneously reports in Physical Review Letters its success in using a laser to accelerate lower-energy electrons.

Multi-Use Accelerators

Applications for these new particle accelerators would go well beyond particle physics research. Byer said laser accelerators could drive compact X-ray free-electron lasers, comparable to SLAC’s Linac Coherent Light Source, that are all-purpose tools for a wide range of research.

Another possible application is small, portable X-ray sources to improve medical care for people injured in combat, as well as provide more affordable medical imaging for hospitals and laboratories. That’s one of the goals of the Defense Advanced Research Projects Agency’s (DARPA) Advanced X-Ray Integrated Sources (AXiS) program, which partially funded this research. Primary funding for this research is from the DOE’s Office of Science.

SLAC's Joel England explains how the same fabrication techniques used for silicon computer microchips allowed their team to create the new laser-driven particle accelerator chips. (SLAC)

The study's lead authors were Stanford graduate students Edgar Peralta and Ken Soong. Peralta created the patterned fused silica chips in the Stanford Nanofabrication Facility. Soong implemented the high-precision laser optics for the experiment at SLAC’s Next Linear Collider Test Accelerator. Additional contributors included researchers from the University of California-Los Angeles and Tech-X Corp. in Boulder, Colo.

SLAC is a multi-program laboratory exploring frontier questions in photon science, astrophysics, particle physics and accelerator research. Located in Menlo Park, California, SLAC is operated by Stanford University for the U.S. Department of Energy Office of Science. To learn more, please visit www.slac.stanford.edu.

DOE’s Office of Science is the single largest supporter of basic research in the physical sciences in the United States, and is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.

Citation: E. A. Peralta et al., Nature, 27 Sept 2013 (10.1038/nature12664)

Press Office Contact: Andy Freeberg, SLAC, afreeberg@slac.stanford.edu, (650) 926-4359

Scientist Contacts:

Robert Byer, Stanford University, rlbyer@stanford.edu, (650) 723-0226

*This post was taken from http://www6.slac.stanford.edu/news/2013-09-27-accelerator-on-a-chip.aspx*

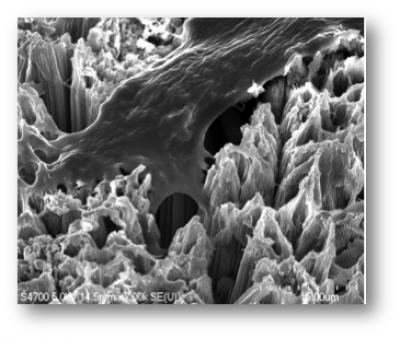

Dental implants that heal faster and fight infection

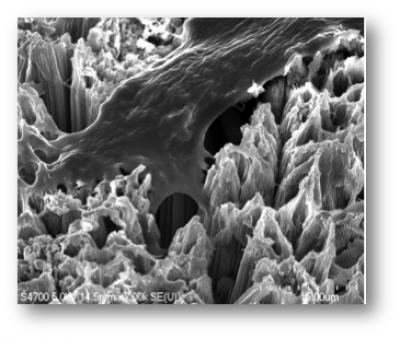

A bone cell anchoring itself to a surface of titanium dioxide nanotubes. Because osteoblasts (cells that synthesize bone tissue) readily adhere to this novel surface, dental implants coated with TiO2 nanotubes could significantly improve healing following dental implant surgery. (Credit: Tolou Shokuhfar/Michigan Technological University)

Michigan Technological University researchers have developed a way to use self-assembled titanium dioxide (TiO2) nanotubes to lower the rate of dental-implant failures.

Dental implants are posts, usually made of titanium, that are surgically placed into the jawbone and topped with artificial teeth.

While most dental implants are successful, a small percentage fail and either fall out or must be removed.

“There are two main issues that concern dentists: infection and separation from the bone,” said Tolou Shokuhfar, an assistant professor of mechanical engineering.

The mouth is a dirty place, so bacterial infections are a risk after implant surgery, and sometimes bone fails to heal securely around the device.

Because jawbones are somewhat thin and delicate, replacing a failed implant can be difficult, not to mention expensive. Generally, dentists charge between $2,000 and $4,000 to install a single implant, and the procedure is rarely covered by insurance.

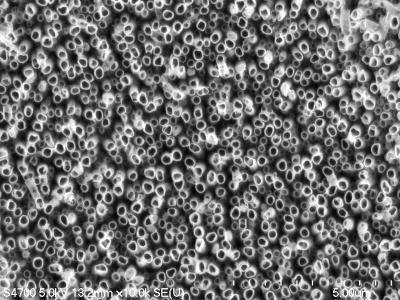

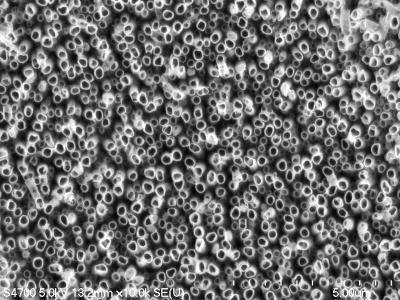

A forest of titanium dioxide nanotubes etched into metallic titanium. The surface may improve the longevity of dental implants. (Credit: Tolou Shokuhfar/Michigan Technological University)

Shokuhfar is now working with Cortino Sukotjo, a clinical assistant professor at the University of Illinois at Chicago (UIC) College of Dentistry on a dental implant with a surface made from TiO2 nanotubes, but she has been making and testing them for several years.

“We have done toxicity tests on the nanotubes, and not only did they not kill cells, they encouraged growth,” she said.

She has already demonstrated that bone cells grow more vigorously and adhere better to titanium coated with TiO2 nanotubes than to conventional titanium surfaces. That could keep more dental implants in place.

Drug delivery

The nanotubes can also be a drug delivery system. Shokuhfar’s team, in collaboration with Alexander Yarin, a professor in UIC’s Department of Mechanical and Industrial Engineering, loaded TiO2 nanotubes with the anti-inflammatory drug sodium naproxen and demonstrated that it could be released gradually after implant surgery.

That assures that the medicine gets where it’s needed, and it reduces the chances of unpleasant side effects that arise when a drug is injected or taken orally.

This cutaway view of a titanium dioxide nanotube reveals the drug sodium naproxen on the inside. On the surface of a dental implant, these nanotubes could release this anti-inflammatory drug or other pharmaceuticals that could speed healing. (Credit: Tolou Shokuhfar/Michigan Technological University)

To fight infection, the TiO2 nanotubes can also be laced with silver nanoparticles. Shokuhfar and Craig Friedrich, who holds the Richard and Bonnie Robbins Chair of Sustainable Design and Manufacturing at Michigan Tech, are conducting research, as yet unpublished, that is focused on orthopedic implants, such as artificial hips, but which also applies to dental implants.

“Silver has antimicrobial properties, and we are capable of obtaining a dose that can kill microbes but would not hurt healthy cells and tissues,” she said. In particular, it can help prevent biofilms, vast colonies of bacteria that can cover implants and be very difficult to eradicate. A nanotextured implant surface embedded with silver nanoparticles could prevent infection for the life of the implant.

The TiO2 nanotubes also have a cosmetic advantage: transparency. That’s a plus for any dental implant, but especially for a new type made from zirconia, which some patients choose because it is totally white.

Shokuhfar expects that implants with the new nanotubular surface will be easily assimilated into the market, since titanium implants, both dental and orthopedic, have a long history.

Shokuhfar and Friedrich have received a provisional patent and are working with two hospitals to further develop the technology and eventually license it. “As soon as the related paper work is taken care of and we get the FDA approval, the technology could be applied. However I am not aware how long all that would take,” she told KurzweilAI.

REFERENCES:

- Tolou Shokuhfar et al., Intercalation of anti-inflammatory drug molecules within TiO2 nanotubes, RSC Advances, 2013, DOI: 10.1039/C3RA42173B

- T. Shokuhfar et al., Biophysical Evaluation of Osteoblasts on TiO2 Nanotubes, Nanomedicine: Nanotechnology, Biology, and Medicine, 2011, Under Revision

- Patent: US 2013/0196128, COMPOSITIONS, METHODS AND DEVICES FOR GENERATING NANOTUBES ON A SURFACE

*All credit for this post goes to: http://www.kurzweilai.net/dental-implants-that-heal-faster-and-fight-infection *

Friday, September 6, 2013

Wednesday, September 4, 2013

HIV Vaccine

“HIV/AIDS has killed 35 million people worldwide, and more than 34 million people currently live with the virus infection. Since the virus was characterized in 1983, there have been numerous trials through pharmaceutical companies and academic institutions around the world to develop vaccines; however, no vaccine has been successful to date.”

Researchers from Western University in Canada and Sumagen Canada Inc. have successfully completed the Phase I clinical trial (SAV CT 01) of the first and only preventative HIV vaccine based on a genetically modified killed whole virus (SAV001-H). The trial showed the vaccine to be successful in all patients, with no adverse side effects.

The vaccine is prophylactic, meaning that it is not a cure for people who are already living with HIV/AIDS but a preventative treatment for individuals who have not been infected with HIV. In an interview, Dr. Chil-Yong Kang explained that the vaccine could also be beneficial in suppressing the virus in people who already have HIV or hepatitis C.

“Other HIV vaccines evaluated through human clinical trials have focused on either one specific component of HIV as an antigen, genetic vaccine using recombinant DNA, or recombinant viruses carrying the HIV genes. Kang’s vaccine is unique in that it uses a killed whole HIV-1, much like the killed whole virus vaccines for polio, influenza, rabies and hepatitis A. The HIV-1 is genetically engineered so it is safer and can be produced in large quantities.”

Scientists are optimistic about the results from the Phase I trial because the vaccine boosted antibody production in HIV-positive volunteers, which raises hopes that Phase II human clinical trials will yield a substantially increased immune response to HIV.

"We have proven that there is no safety concern of SAV001-H in human administration and we are now prepared to take the next steps towards Phase II and Phase III clinical trials," said Dr. Dong Joon Kim in the official release. "We are delighted to be one step closer to the first commercialized HIV vaccine."

“Sumagen anticipates not only having the first HIV vaccine in market but also the eradication of HIV/AIDS for human beings.”

Source: http://communications.uwo.ca/media/releases/2013/September/no_adverse_effects_in_volunteers_following_phase_i_clinical_trial_of_sumagen_aids_vaccine.html

Friday, August 30, 2013

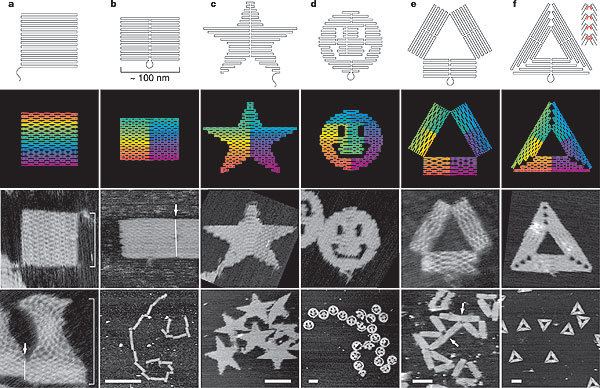

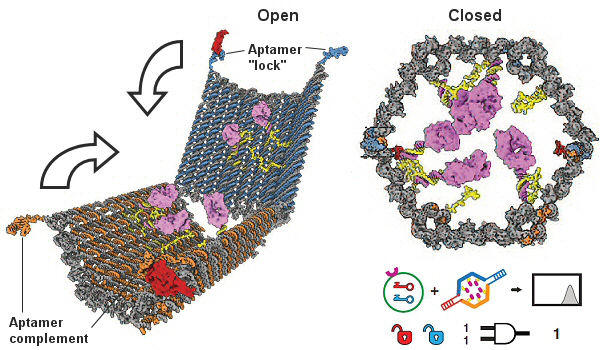

DNA Nanorobots: A New Method For Treating Cancer

Kevin Russell and I had the opportunity to interview Dr. Ido Bachelet from the Bar-Ilan Institute of Nanotechnology and Advanced Materials. Dr. Bachelet and his team are developing a new form of cancer drug delivery system that has the potential to eradicate cancerous tissue from the body without damaging healthy cells.

However, before I begin, it’s important to understand that all of the technologies we are going to discuss are not science fiction, but science reality.

DNA origami is a technique that allows scientists to use DNA molecules as programmable building blocks, relying on the programmable molecular recognition between complementary DNA strands to assemble designed structures. Starting from a single strand of DNA, scientists are able to manipulate its sequence, telling the DNA to self-assemble into predetermined shapes. To do this, scientists use software similar to CAD, which programs the DNA and tells it to fold back and forth into a desired shape or pattern.

Almost seven years after the original DNA origami technique was developed by Paul Rothemund at the California Institute of Technology, Dr. Ido Bachelet and his team evolved the concept into a radical new drug delivery system. In the recent publication ‘Designing a bio-responsive robot from DNA origami’, Dr. Bachelet’s team took the genome of a virus as the primary building block and created a cage-like scaffold capable of housing life-promoting drugs such as antibiotics and chemotherapy medicines.

However, these nanorobots not only have the ability to house powerful medicines; they can also deliver the drugs to the precise location that requires healing.

The current nanorobots float freely through the bloodstream by the billions and remain neutral until they encounter a location that requires assistance. The nanorobots recognize that they have reached the proper location through molecular cues, which they are programmed to respond to by moving from their closed, neutral state to their open state (see image 2 below). These molecular cues act as the key that switches the neutralized nanorobot into combat-ready mode and tells it to treat the site, delivering the drugs directly to the cancerous spot or site of infection.

Currently, one of the primary problems with chemotherapy is that the drugs injected into the patient kill not only the rogue cancerous cells but healthy cells as well. By taking a sample of the cancerous cells, or by knowing the specific molecular markers of the rogue cells, scientists are able to program the nanorobots to attack only the enemy cells with a specific payload.

The idea is that the nanorobots don’t excrete or release the drug. Instead, they make the drug accessible or inaccessible by turning it on and off. Because the drug is linked to the robot, one could think of it as a sword and its wielder. As the nanobot prepares to attack the cell that it was programmed to destroy, it unsheathes its sword (the drug), attacks the cell, and then sheathes the drug again, leaving all of the healthy cells around the site unaffected by the potent chemotherapy drugs. One could also think of this technology as Predator drones that have the ability to home in on and wipe out enemy insurgents while leaving the civilian population unaffected by the combat.
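The on/off behavior described above can be pictured as a simple lock-and-key check. The Python sketch below is only a toy model: the class name, the marker names, and the rule that every programmed cue must be present before the payload is exposed are all assumptions made for illustration, not a description of Dr. Bachelet’s actual design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Nanorobot:
    """Toy model of a nanorobot that keeps its drug sheathed by default."""

    # Hypothetical marker names the robot is "programmed" to recognize.
    target_markers: frozenset

    def payload_exposed(self, cell_markers):
        # Assumption: the robot opens only when every programmed cue
        # is found on the cell it has encountered.
        return self.target_markers <= set(cell_markers)


robot = Nanorobot(frozenset({"marker_a", "marker_b"}))

healthy_cell = {"marker_a"}                        # only one cue present
rogue_cell = {"marker_a", "marker_b", "marker_c"}  # both cues present

print(robot.payload_exposed(healthy_cell))  # drug stays sheathed
print(robot.payload_exposed(rogue_cell))    # drug is made accessible
```

In this sketch the drug never leaves the robot; the method only reports whether it is accessible, which mirrors the sword-and-wielder picture rather than a release mechanism.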

I’m sure some of you are asking, ‘What happens when these nanorobots have achieved their objective? I don’t want millions, maybe even billions, of nanorobots loaded with powerful chemo drugs floating in my body.’ The nanorobots have a half-life of an hour or two, but scientists can modify them to last up to 3 days before they start the disintegration process, which is carried out by enzymes. These enzymes slowly start to form segregates about a half-micron in size (the size of bacteria). As they slowly dismantle the nanobot, the payload is gradually released into the body at non-lethal doses until the enzymes have completed their task of disassembling, leaving the body free of the cancer and of any nanorobots.

FUTURE IMPLICATIONS

The current model of nanobot is extremely efficient at disengaging certain types of cells or delivering payloads to specific sites in the body. However, for diseases such as Alzheimer’s disease or Parkinson’s disease, where the body suffers a death on the molecular level, these nanobots are ineffective. In the future, it is possible that we will see an all-in-one nanorobot package. These nanobots would have the ability not only to destroy cells but also to promote the rejuvenation of cells without increasing the likelihood of tumors or cancers.

Another function that we will see in coming nanorobot versions is the ability to direct or steer nanoparticles to the precise location that requires treatment. In effect, this would create a new kind of surgeon: the Nanorobot Surgeon. These doctors would have the ability to cut, stitch, and sample cells without ever having to perform what we consider modern-day surgery. Dr. Bachelet and his team have already connected these nanorobots to an Xbox controller, which acts as the conductor of a symphony of nanorobots working in unison to eradicate cancerous cells. The systems for controlling these nanorobots will grow in complexity and sophistication, completely changing the face of healthcare around the world.

Labels: 2030, 2045, advanced technology, Brandon Tomlin, cure for cancer, DNA, DNA origami, future of health care, future societies, future technology, futurology, nanobots, Nanorobots, nanotechnology

Thursday, August 22, 2013

Wednesday, August 21, 2013

Saturday, August 17, 2013

Your next GUI will be a BUI

When the common language of computers was first being established, engineers had to agree that a piece of code such as 1101 was the equivalent of an A (for example). This collaboration helped create a standard working model that current and future developers could build on. As computers continued to grow in sophistication, so did the standardized models that helped developers push out new software without having to reinvent the wheel.

After the standard model of computer architecture was firmly established in the computing community, a new method for operating computers emerged in the form of the Graphical User Interface (GUI). The GUI was a new and exciting development, and it was one of the major launching points for the personal computer. However, this was the first GUI in development, and it would be used by a mass audience that more than likely had never seen a computer, let alone a GUI. Developers had to create a standard graphical model that gave end users, no matter what GUI they might be operating, a standard subconscious model of how the system operated. They did this by giving users an idea of how one GUI relates to another and how to complete simple tasks with little cognitive strain.

As the GUI integrated itself into society and took a concrete form, new touch technologies began to launch for mass consumption, repeating the same process as the GUI. This created a standard model of touch technology that set a precedent for which hand gestures represent which input commands to the device, allowing standardized gestures to be adopted across all touch technology. For example, the thumb and index finger coming together represents a close or zoom-out command for the device.

With the development of EEGs and new brain-to-computer technologies, a new standardized model needs to be developed for how to think in order to operate these emerging technologies. For example, to operate the thought-guided helicopter that Professor Bin He and his team created, your EEG patterns, or the thoughts that you think to maneuver the device, need to be calibrated to the computer. So, before you could begin to operate the helicopter, you would have to have the computer register what you are thinking for up, down, left, and right. Normally, to do this, most people think of either an object or a color: green for take off, red for stop, and a random object or color for left and right. This calibration process needs to take place in order for the device to understand what you are trying to convey.

This is why a Brain User Interface (BUI) needs to be developed as a standard natural model to translate what we are trying to communicate to our devices. A standard operating procedure such as this would help standardize how we think to control our technologies with our minds until we are able to develop true mind-reading technologies. It would lay down a foundation similar to the gesture and GUI models so that a mass audience could adopt and apply the same ‘thought principles’ to all EEG devices. It would also make possible out-of-the-box devices that require little EEG calibration and operate on the same thought principles as every other EEG device.
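To make the calibration step concrete, here is a minimal Python sketch of one way a device could register a user's thoughts for each command and then decode new ones. Everything in it is hypothetical: the two-number "feature vectors" stand in for whatever an EEG headset would really measure, the numbers are invented, and the nearest-centroid matching is a generic technique chosen for illustration, not the method used by Professor He's team.

```python
import math


def centroid(samples):
    """Average a list of equal-length feature vectors."""
    count = len(samples)
    return [sum(column) / count for column in zip(*samples)]


def calibrate(recordings):
    """Build a per-user profile: one average pattern per command."""
    return {command: centroid(samples) for command, samples in recordings.items()}


def decode(profile, features):
    """Return the command whose calibrated pattern is closest."""
    return min(profile, key=lambda command: math.dist(profile[command], features))


# Invented calibration data: the user "thinks" each command twice.
recordings = {
    "up": [[0.9, 0.1], [1.0, 0.2]],
    "down": [[0.1, 0.9], [0.2, 1.0]],
}

profile = calibrate(recordings)
print(decode(profile, [0.8, 0.3]))
```

A shared BUI standard, as argued above, would amount to shipping devices with a common profile so that most of this per-user calibration could be skipped.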

Friday, August 16, 2013

"Singularity Terrorism: Military Meta-Strategy in Response to Terror and Technology"

"Can the same strategies and tactics, coupled with radically empowering and decentralized technologies, be put to use by militaries to similarly disrupt the patterns of terrorists themselves?" -- Read the full article here

Tuesday, August 6, 2013

The Psychology of Failed Predictions

Psychology plays a role in every decision that we make, from choosing a mate and starting a family to responding to the advertisements that persuade us to purchase the latest electronics. But what role does psychology play when it comes to our ability to predict world events or the latest emerging technologies?

Is it possible that we are limited by certain psychological barriers that hinder our ability to reasonably survey and forecast future events? A new study from the Machine Intelligence Research Institute (MIRI), ‘How We’re Predicting AI – or Failing To’ by Stuart Armstrong and Kaj Sotala, shows that there is substantial reason for suspicion when it comes to our ability to predict future events. According to the data in the study, forecasters may struggle with subconscious psychological predispositions that hinder their ability to generate successful forecasts.

This study was not limited to expert forecasts; it also included the forecasts of non-experts, and it cites data showing no distinguishable difference between the predictions of expert and non-expert forecasters.

Figure 1: “Median estimate for human-level AI, graphed against date of prediction” (1).

One of the most common mistakes that expert and non-expert forecasters make is described by the so-called Maes-Garreau Law, formulated by Kevin Kelly, which states that forecasters will predict that a certain event will happen within their lifetime: “In this case, the rise of AI that will save them from their own deaths, akin to a Rapture of the Nerds” (1). A second mistake that forecasters make is predicting that ‘event X is within 20 years’.

Figure 2: “Difference between the predicted time to AI and the predictor’s life expectancy, graphed against the predictor’s age” (1).

In order to produce more accurate forecasts and predictions, it is important that we understand the psychological tendencies that could obstruct our vision of the future. Doing so could allow us to peer into the future with unbiased eyes and a fresh perspective on what is logically possible according to our data, rather than blindly projecting our egos onto our scenarios and forecasts.

SOURCE: (1) HTTP://INTELLIGENCE.ORG/FILES/PREDICTINGAI.PDF

Wednesday, July 31, 2013

Tuesday, July 30, 2013

The Extinction Of The Pure Human

There is no such thing as the Human, only the Transhuman.

We are all transhuman. The transhumanism spectrum varies in magnitude, and so does the way that the transhuman philosophy is implemented in the lives of the common man. To better understand why we are all transhuman, and why most of the human species has already disposed of its pure human form, we need to understand the current working definition of transhumanism from Max More.

“Transhumanism is a class of philosophies of life that seek the

continuation and acceleration of the evolution of intelligent life beyond its

currently human form and human limitations by means of science and technology,

guided by life-promoting principles and values.” (Max More 1990) (1).

According to this definition, we are using science and technology as a catalyst to accelerate human evolution and intelligence to exceed human limitations. Furthermore, we are able to manipulate our environment and enhance our genetic composition. Thus, using any type of enhancement to improve the quality of life and push the boundaries of human limitations would ultimately fall into the transhumanist philosophical category.

Using this definition of transhumanism, at what point do we make the transition from pre-programmed evolutionary mechanisms to the transhuman being? Do we become transhuman when our parents place the first electronic gadget into our hands? Or perhaps when we receive our first “life-promoting” vaccines, such as the hepatitis A, hepatitis B, or influenza vaccine?

My answer is no. We become transhuman much earlier. We shed our pure human form and become transhuman in the womb, through the use of genetically engineered foods, iodized salt, and prenatal pills (2).

These standardized health procedures are all forms of technology that strip away our pure evolutionary form and convert us into transhuman beings. Today, we only consider people transhuman if they carry some sort of advanced gadgetry or ingest advanced neural enhancing pills. However, those are all extreme cases of transhumanism, and as technology continues to merge with biological beings, the extreme ends of the transhumanism spectrum will be pushed further out. Transhuman practices of today will become standard practices in future societies. This gives us the ability to take our evolutionary destiny into our own hands, creating a being that is conscious of its evolutionary development.

Because the transition into worldwide transhumanism was a subtle metamorphosis, most people do not consider themselves transhuman. It is advisable to expect a similar evolution when contemplating the progression to posthumanism. As humans, it is natural to strive to improve the quality of life for ourselves and for our children. Survival is our most basic biological need. Because of this, it is reasonable to suspect that the same subconscious actions will be taken when moving from the transhuman state to the posthuman state.

Sources:

(1) http://humanityplus.org/philosophy/transhumanist-faq/#answer_19

(2) http://blogs.discovermagazine.com/crux/2013/07/23/how-adding-iodine-to-salt-boosted-americans-iq/

Thursday, July 25, 2013

The History and Future of Computer Input.

As technology increases in complexity, with more servers crunching more data, shouldn’t the way that humans interact with computers also grow in sophistication, while improving to a state where complex objectives can be executed without equally complicated input?

As the vastness and interconnectedness of computer systems ingrain themselves into ever more complex systems, the way that we input data into those systems needs to evolve to compensate for the amount of human effort that would otherwise be required to operate them.

Until recently, the way that our species has historically interacted with computer systems has experienced little to no growth in either its sophistication or its simplicity.

Engineers and computer scientists are now making great strides in the development and implementation of natural language systems, neural input, and artificial intelligence. These technologies are merging to create a new way to interact with technology.

The objective of this article is to give the reader an

understanding of the history of input and where the future of input is heading

in the coming years and decades.

One example of the stagnation in the evolution of input is the QWERTY keyboard, which has been the gold standard of data input since the mid 1900s. However, in the last 5 to 10 years we have started to witness the emergence of two new types of data input.

(The data points on the graph represent a new type of input or a substantial evolution in a certain input technology. Each technology is graphed out in chronological order. The data in the chart will be provided at the end of the article.)

As the graph Rate of Input Change Throughout History shows, in 1866 the first teletype machines or keypunches were invented as a means to program software. These keypunches were slow, made errors difficult to correct, and required armies of office workers to punch holes in cards to create software with limited functionality. Growth remained fairly consistent until 1946, when the first computer keyboards were adapted from the punch card and early teletype machines.

In 1946 the ENIAC computer used a punched card reader as its input and output device. Then, in 1948, the BINAC computer used an electromechanically controlled typewriter to input data directly onto magnetic tape for feeding the computer data and to print the results.

Now that we have established a limited part of the history of input from a tactile perspective, which will be identified as the first wave of technological development, it is important to note that the second wave of technological development does not begin at the end of the first phase, but in the middle. This allows for constant advancement without technological development stagnating and faltering.

By 1936, the first electronic speech synthesizer, called the “Voder”, had been created at AT&T Bell Labs by Dudley, Riesz and Watkins. This allowed the first phase, tactile input of computer systems, to continue on to the top of the S curve, where a technology is ‘matured’ and experiences little to no significant growth, while the second phase of technological growth begins its exponential cycle and takes over from the first phase of development, giving rise to a more efficient technology. One could compare this type of development to Darwin’s theory of evolution. (To learn more about the evolution of technology, see Methods of Futuring part 2.)
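The overlapping S curves described here can be sketched with a logistic function. This is a minimal illustration, not the model behind the article's graphs: the function name, the steepness value, and the midpoint years are assumptions chosen only to show one wave maturing while the next is still ramping up.

```python
import math


def s_curve(year, midpoint, steepness=0.15):
    """Logistic maturity of a technology: near 0 when new, near 1 when mature."""
    return 1.0 / (1.0 + math.exp(-steepness * (year - midpoint)))


# Hypothetical midpoints, chosen only to illustrate overlapping waves.
WAVES = {"tactile input": 1946, "voice input": 1995}

for year in (1936, 1971, 2013):
    levels = ", ".join(f"{name}: {s_curve(year, mid):.2f}"
                       for name, mid in WAVES.items())
    print(year, levels)
```

In this sketch the second wave starts rising while the first is still climbing, which is the "begins in the middle" behavior the text describes.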

In 1971 DARPA (Defense Advanced Research Projects Agency) established the ‘Speech Understanding Research’ (SUR) program with the objective of developing a computer system that could understand continuous speech. The program received $3 million per year of government funding for 5 years. From this initiative several project groups were formed, at institutions such as CMU, SRI, MIT Lincoln Laboratory, System Development Corporation (SDC), and Bolt, Beranek and Newman (BBN) (see graph for sources).

(The graph above shows the growth of voice technology and neural implants from 1930 to 2000.)

Fast forward 11 years to 1982: Dragon Systems, founded by Drs. Jim and Janet Baker, releases its first language technology. Just 13 years later the company releases dictation speech recognition technology, allowing the public for the first time to dictate natural language to a computer system.

In 2000 the first worldwide voice portal was created by Tellme, and just 3 years later healthcare was radically impacted by highly accurate speech recognition. This continuous growth of voice recognition software has given us the current-day technology of Watson, Siri, and Google’s voice recognition technologies.

This leaves us with highly integrated computer systems that can execute extremely complicated mathematical calculations and deliver rapid explanations that are easy for humans to digest. In the next few years, it is possible that we will have a cloud service that allows us to speak naturally to our devices and issue commands that would otherwise take us several minutes, if not hours, to program, or to research a simple answer.

Here is an example of the type of functionality such a system could execute:

(user speaking to computer) “Computer: what are the top

three diseases that are associated with my family’s medical history that are

neurological, and what is my likelihood of developing one of these mental

disorders?”

By 1978 the third phase of new technological input development started to gain traction. Dobelle’s first prototype was implanted into “Jerry”, a man blinded in adulthood. In 1978 a single-array BCI containing 68 electrodes was implanted onto Jerry’s visual cortex and succeeded in producing phosphenes, the sensation of seeing light. Johnny Ray (1944-2002) developed ‘locked-in syndrome’ after suffering a brain-stem stroke in 1997. One year later, in 1998, 20 years after “Jerry”, he underwent surgery to place a brain implant that allowed him to control a computer cursor with pure thought.

Just 2 years after Ray’s implant, a team of researchers succeeded in building a BCI that reproduced a monkey’s movements while the monkey operated a joystick or reached for food. The BCI operated in real time and could also control a separate robot remotely over the Internet. However, the monkeys were not able to see the arm moving and did not receive any feedback from the arm.

In 2005 Matt Nagle became the first person to control an artificial hand using a BCI as part of the first nine-month human trial of Cyberkinetics’ BrainGate chip-implant. The chip was implanted into Nagle’s right precentral gyrus (the area of the motor cortex responsible for arm movement). The 96-electrode BrainGate implant allowed Nagle to control a robotic arm by thinking about moving his hand, along with other electronic devices such as a computer cursor, lights and a TV.

One year later the Altran Foundation for Innovation developed a brain-computer interface with electrodes located on the surface of the skull instead of directly in the brain, which would require surgery.

Fast forward 7 years: we now have caps equipped with EEG sensors that are sensitive enough to detect EEG waves through the cranium and determine which command a user intends (left, right, up, down), giving the person the ability to control a helicopter with their mind.

(The graph above covers 1995 to 2013 and primarily shows the growth of neural implants and EEG readers that function as input devices.)

FUTURE IMPLICATIONS: THE INVISIBLE FOURTH TECHNOLOGICAL PARADIGM OF INPUT

A fourth phase of technological growth is happening in parallel with phases two and three, combining natural language, neural implants, and A.I. Natural language and neural implants will increase our ability to accomplish complex tasks. However, the resulting data will be too much for our biological brains to process. Instead, we will merge our biological brains and synthetic implants with a God-like artificial intelligence to accomplish ever more sophisticated commands in a simple way that our biological intelligence can understand.

This leads to the final phase of computer input, in which an artificial intelligence is integrated not only into our brains but also into our minds, giving humans the ability to program complex systems from pure thought. However, technology such as this will not be available until all three phases of computer input have fully matured and our understanding of the brain and mind has evolved into a more concrete and fully understood science.

Sources: All source information and data can be found in the Google Doc that contains the data from the graphs, where I compiled all of my data. This document will be left open to the public to add and manipulate data points to create a more in-depth picture of where the future of input is heading.

Please feel free to add your predictions to the spreadsheet.

Link to locked document: https://docs.google.com/spreadsheet/ccc?key=0AlCsYSuSapuZdGFEOHo4dzJoY1JGRXVCdUl0dzhhdWc&usp=sharing

Link to open document: https://docs.google.com/spreadsheet/ccc?key=0AlCsYSuSapuZdExHQTJxQldUbk1ReWJpNVlmcnhTd3c&usp=sharing

Saturday, July 20, 2013

What if Google bought Detroit?

What if Google bought Detroit? Is it financially possible, and if so, what would Google do with an entire city?

According to Yahoo! Finance, Google’s market cap was approximately $297.46 billion as of July 20th, 2013. An article from usatoday.com describes Detroit’s bankruptcy as one of the largest of its kind in U.S. history. With a population of approximately 700,000, Detroit’s debts and liabilities could reach as high as $20 billion.
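To put the two figures side by side, here is a small back-of-the-envelope sketch. The constant and function names are made up for illustration; the dollar amounts are the ones quoted above. Keep in mind that market capitalization is a valuation of the company, not cash on hand, so this is only a rough sense of scale.

```python
GOOGLE_MARKET_CAP_USD = 297.46e9   # figure quoted in the article (July 20, 2013)
DETROIT_LIABILITIES_USD = 20e9     # upper estimate quoted in the article


def share_of_market_cap(liabilities, market_cap):
    """Return liabilities as a fraction of market capitalization."""
    return liabilities / market_cap


share = share_of_market_cap(DETROIT_LIABILITIES_USD, GOOGLE_MARKET_CAP_USD)
print(f"{share:.1%}")  # 6.7%
```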

Google most definitely has enough purchasing power to bail out the city of Detroit, but what would it do with a whole city? Historically, Detroit has been the heart of car manufacturing, where Henry Ford invented what we consider the modern-day assembly line. Thus, Google could convince the existing car manufacturers to start producing Google Cars at a premium rate. However, Google could convince any manufacturing company to produce its driver-less cars at a premium rate.

What would be appealing to Google would be the ability to pass citywide legislation that allowed it to use the entire city of Detroit as a real-life testing ground for all of its technologies without having to comply with the usual city laws and regulations. This would allow it to test cutting-edge technologies in everyday scenarios. It would also provide the authority needed to re-imagine how a city operates on an information level, and to test not only its driver-less cars but also products such as mobile commerce, free public internet and free public transportation.

Most importantly, though, Detroit could become an example to other cities across the United States of how to develop a sustainable city using groundbreaking technology that would normally get stuck in the standard bureaucratic processes. It may also radically change our perspectives on education, transportation, green energy, and public policy.

Having a city such as this would draw in leading minds from all around the world, including scientists, engineers, coders, IT experts, and green architects. These individuals could present groundbreaking ideas and test their new technologies on a historically unprecedented laboratory scale. Taking Detroit from the automotive center of the United States and transforming it into an innovative technological hub could challenge the authority of Silicon Valley as the most technologically inventive region in the world.

What do you think? Share your opinion below in the comments section.

*This article is a hypothetical scenario, and not a call to action.*

Sunday, June 23, 2013

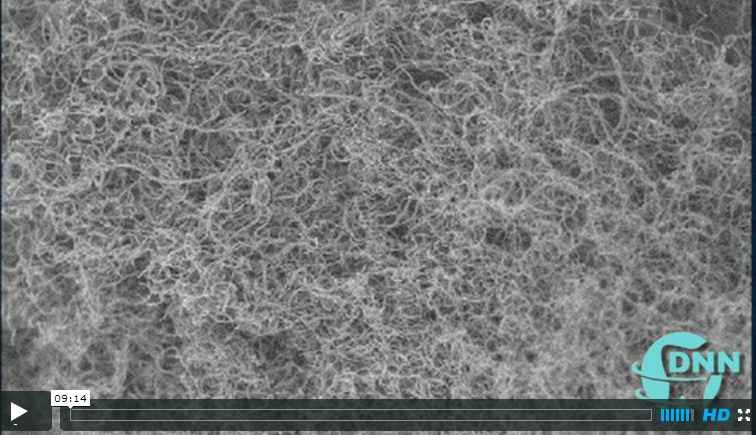

End of Moore's Law

According to Moore’s Law, the number of transistors on an integrated circuit doubles approximately every two years (a figure of 18 months is also often quoted). Gordon E. Moore described the trend in his 1965 paper ‘Cramming more components onto integrated circuits’ in Electronics magazine. Over the last 48 years, this exponential growth has led to 24 doublings of the original number of transistors that could be placed on an integrated circuit. Moore’s Law is starting to buckle, because transistors based on semiconductors can only get so small.
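The doubling arithmetic can be checked with a short sketch. The function names are made up for illustration, and the two-year and 18-month periods are the figures mentioned in the text rather than measured values.

```python
def doublings(years, period_years=2.0):
    """Number of doublings that fit into a span of years."""
    return years / period_years


def growth_factor(years, period_years=2.0):
    """Overall multiplication of transistor count after that many doublings."""
    return 2 ** doublings(years, period_years)


print(doublings(48))                        # 24.0
print(growth_factor(48))                    # 16777216.0
print(growth_factor(48, period_years=1.5))  # 4294967296.0 with an 18-month period
```

With a two-year period, 48 years gives 24 doublings, or roughly a 16.8-million-fold increase; the choice of period changes the result dramatically, which is why the two figures should not be treated as interchangeable.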

"At the rate the current

technology is progressing, in 10 or 20 years, they won't be able to get any

smaller," said physicist Yoke Khin Yap of Michigan Technological

University. Not only is the current technology starting to reach the mature phase

of its growth (top of the S curve), but semiconductors also have another

disadvantage: they waste an exorbitant amount of energy in the form of heat.

Over the last few decades, scientists have experimented with different materials and molecule designs to continue the exponential growth of Moore’s Law. However, these scientists have continued to experiment with semiconductors similar to silicon. Dr. Yap wanted to try something novel, something that might open the floodgates for a new age of electronics.

"The idea was to make a

transistor using a nanoscale insulator with nanoscale metals on top," he

said. "In principle, you could get a piece of plastic and spread a handful

of metal powders on top to make the devices, if you do it right. But we were

trying to create it in nanoscale, so we chose a nanoscale insulator, boron

nitride nanotubes, or BNNTs for the substrate."

Yap’s research team had figured out how to make a “virtual carpet” of BNNTs, which happen to be insulators (that is, highly resistant to electrical charge). By using a laser, the team placed quantum dots (QDs) of gold as small as three nanometers across on top of the BNNTs, forming QD-BNNTs.

When Yap’s team and its collaborators at Oak Ridge National Laboratory (ORNL) fired electrons through both ends of the QD-BNNTs at room temperature, the electrons jumped with precision from gold dot to gold dot by a phenomenon known as quantum tunneling.

"Imagine that the nanotubes

are a river, with an electrode on each bank. Now imagine some very tiny

stepping stones across the river," said Yap. "The electrons hopped

between the gold stepping stones. The stones are so small, you can only get one

electron on the stone at a time. Every electron is passing the same way, so the

device is always stable."

Yap’s team had made a transistor without using a semiconductor. When sufficient voltage was applied, it switched to a conducting state, and when the voltage was low or turned off, it reverted to its natural state as an insulator. During this process there was no leakage, meaning that no electrons from the gold dots escaped into the insulating BNNTs. This allowed the tunneling channel to remain at a cool temperature, whereas silicon is subject to leakage, which wastes energy and generates a lot of excess heat.

What separates Yap’s success from that of others who have tried to exploit quantum tunneling is the submicroscopic size of his gold-and-nanotube device: one micron long and about 20 nanometers wide. "The gold islands have to be on the order of nanometers across to control the electrons at room temperature," Jaszczak said. "If they are too big, too many electrons can flow." In this case, smaller is truly better: "Working with nanotubes and quantum dots gets you to the scale you want for electronic devices."

"Theoretically, these

tunneling channels can be miniaturized into virtually zero dimension when the

distance between electrodes is reduced to a small fraction of a micron,"

said Yap.

FUTURE IMPLICATIONS

With Moore’s Law coming to an end in the next 10 to 20 years, a new technology must rise to take its place and continue the technological development that has been seen in the last 50 years. In the future, we are going to see more scientists using quantum phenomena to overcome the traditional physical barriers that are starting to loom and threaten technological development. Yap’s method could continue Moore’s Law while also creating more power-efficient devices that could go days without being charged.

Friday, June 21, 2013

The Last Human

Despairingly he replied, “From where I come, I can go no more." Dispirited, his words slipped from his human lips and rang with sorrow. "Our skies are red, vast blue oceans lie dried in their beds. The mountains shaded in rust of red, deserts wish they too could be dead.”

Monday, June 10, 2013

Apple WWDC 2013: Anki Drive Merging the Physical World with the Digital

At the WWDC keynote this morning, Apple showcased something quite interesting: a new company called Anki and its product Anki Drive, which is centered around artificial intelligence and robotics. These robots communicate over Bluetooth LE. The little race cars that were presented at the show can sense the track up to 500 times a second, giving them a smooth and fluid motion that simulates real-life driving. As the cars speed around the track, they can communicate with each other, understanding each other’s position on the track and anticipating the motion of the surrounding cars.

Not only can these cars detect each other’s presence on the track, but they can also communicate and work together as a unit to complete a set objective. For instance, there were four cars on the track, and the three leading cars were told to block the last car from taking the lead. To complete this task, all three leading cars worked as a unit to prevent the last car from obtaining the lead position. All of these commands were given wirelessly to the robotic cars from an iPhone application. The iOS-exclusive game is available as a beta in the App Store today; the full release will not arrive until this fall.

FUTURE IMPLICATIONS:

Over the next 5-10 years, the physical world is going to start merging drastically with the digital world. With the integration of small electronic parts, we are going to have the ability to connect with and manipulate physical objects through electronic mediums such as our phones, tablets, and computers. However, this is only the first stage of this type of technology being available to the masses. With more physical objects joining the interconnectedness of the digital world, we could possibly ‘log in’ to any physical object on the face of the earth and manipulate it to our will.

Serious Wonder has already covered technological breakthroughs such as this. With devices that can detect and computationally interpret brain waves, which can then be translated into motion for physical objects, the mere thought of moving an object can make it happen in real life. Although this technology is still in its infancy, according to the law of accelerating returns it will grow exponentially and will bring in a third paradigm of how we interact with technology. The first is tactile: keyboards and touch screens; the second is voice commands; and the third is neurological input.

Tuesday, June 4, 2013

Scientists Find the Sorcerer's Stone: Turning Liquid Cement into Metal

Ancient alchemists were in search of the great sorcerer's stone. Today, using electron trapping, scientists have found a way to convert ordinary cement into metal. Not only are scientists able to convert liquid cement into liquid metal, but they can also take a non-electrically conductive material and turn it into one that is highly conductive. This phenomenon of trapping electrons and turning liquid cement into liquid metal was recently discovered, but not fully understood until now. By knowing how this process takes place, scientists now know the conditions needed to create and trap electrons in materials, and they are able to develop and test other materials to find out whether they can be made to conduct electricity using this method.

“This new material has lots of applications, including as thin-film resistors used in liquid-crystal displays, basically the flat panel computer monitor that you are probably reading this from at the moment,” said Chris Benmore, a physicist from the U.S. Department of Energy’s (DOE) Argonne National Laboratory.

The results were reported May 27 in the journal Proceedings of the National Academy of Sciences in the article "Network topology for the formation of solvated electrons in binary CaO-Al2O3 composition glasses."

“The team of scientists studied mayenite, a component of alumina cement made of calcium and aluminum oxides. They melted it at temperatures of 2,000 degrees Celsius using an aerodynamic levitator with carbon dioxide laser beam heating. The material was processed in different atmospheres to control the way that oxygen bonds in the resulting glass. The levitator keeps the hot liquid from touching any container surfaces and forming crystals. This let the liquid cool into glassy state that can trap electrons in the way needed for electronic conduction.”

Scientists discovered that the conductivity was created when the free electrons were trapped in the cage-like structures that formed in the glass. The trapped electrons provided a mechanism for conductivity similar to the mechanism that occurs in metals.

FUTURE IMPLICATIONS:

The method could possibly one day be applied directly to 3D printers, giving engineers the ability to print intricate electronic designs that could be used in everyday electronics. However, this scientific development does not only apply on earth, but also in space: the compounds and elements that are found in cement can also be found on the moon.

By applying the same method that we use on earth, we could possibly build 3D printers large enough to use this process to help build and establish a moon colony. If this method could be perfected by 2023, when we hope to send people to Mars to establish a permanent colony, it could be one of the biggest economic driving forces for expanding the Mars colonies without requiring substantial financial support from earth.

Not only could this method be used in the development of space colonies, but it could also be used to transform readily available resources into precious metals and other rare resources. In the years to come, this method could change the way that we view global resources and our ability to access them, opening a door to global development and, hopefully, world peace.

Source: http://www.anl.gov/articles/formula-turning-cement-metal

“This new material has lots of applications, including as thin-film resistors used in liquid-crystal displays, basically the flat panel computer monitor that you are probably reading this from at the moment,” said Chris Benmore, a physicist from the U.S. Department of Energy’s (DOE).

The results were reported May 27 in the journal the Proceeding of the National Academy of Sciences in the article "Network topology for the formation of solvated electrons in binary CaO-Al2O3 composition glasses."

“The team of scientists studied mayenite, a component of alumina cement made of calcium and aluminum oxides. They melted it at temperatures of 2,000 degrees Celsius using an aerodynamic levitator with carbon dioxide laser beam heating. The material was processed in different atmospheres to control the way that oxygen bonds in the resulting glass. The levitator keeps the hot liquid from touching any container surfaces and forming crystals. This let the liquid cool into glassy state that can trap electrons in the way needed for electronic conduction.”

Scientist discovered that if the conductivity was created when the free electrons were trapped in the cage-like structures that formed in the glass. Then the trapped electrons provided a mechanism for conductive conductivity similar to the mechanism that happens in meals.

FUTURE IMPLICATIONS:

The method could possibly one day be applied directly to 3D printers. Giving engineers the ability to print intricate electronic designs that could be used in everyday electronics. However, this scientific development not only applies on earth. But also in space – the compounds and elements that are found in cement can also be found on the moon.

By applying the same method that we use on earth, we could possibly build large enough 3D printers that use this process to help build and establish a moon colony. If this method could be perfected, by 2023 when we hope to send people to Mars to establish a permanent Mars colony, this method could be one of the biggest economical driving forces of expanding the mars colonies without requiring substantial financial support from earth.

This method could be used not only in the development of space colonies, but also in transforming readily available resources into precious metals and other rare resources. In the years to come, it could change the way that we view global resources and our ability to access them, opening a door to global development and, hopefully, world peace.

Source: http://www.anl.gov/articles/formula-turning-cement-metal

Saturday, June 1, 2013

Methods for Futuring: Part 4 methods


Please note: All content from Parts 1, 3, and 4 was summarized from the book Futuring: The Exploration of the Future by Edward Cornish.

Futuring Methods

In this section we will look in broad strokes at eleven different methods used in futuring.

· Scanning – Typically, scanning is based on a systematic survey of news articles and a review of academic journals for new developments in technology and innovation. It aims to build an understanding of the landscape of developments not only in business and technology, but also in politics.

· Trend Analysis – Determining the rate of change in a trend to identify its nature, cause, speed of development, and potential impact. Careful analysis may be needed, through statistical models or human imagination, to give you a theoretical idea about the future.

· Trend Monitoring – Watching reports regularly is key to making informed decisions about the future.

· Trend Projection – After trends have been analyzed numerically, they can be plotted on a graph to show change in the past and theoretical changes in the future.

· Scenarios – Describing the possible future development of trends, using different strategies such as Murphy’s law, to outline the future.

· Polling – Collecting people’s opinions through surveys, polls, or face-to-face interactions. The Delphi polling method is one of the most popular polling methods among futurists.

· Brainstorming – Generating new ideas about the future to help give you an idea map of the future. This is the same type of rough data that the early explorers would have used to map their journey to a new land.

· Modeling – Gives us the ability to visualize data and to project trends and forecasts into the future.

· Gaming – The simulation of real-world situations by having people play different roles and observing the by-products of these scenario-building games.

· Historical Analysis – The use of historical events to understand how to proceed into the future.

· Vision – The systematic creation of visions of a desirable future for companies, organizations, or individuals. Normally, this process starts with a historical analysis.

By interlacing these methods and using the perspective of the supertrends and superforces that we reviewed in the previous section, along with what we’ve learned from the great explorers, we can start to develop a model of the future through the use of forecasts and the creation of scenarios.
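To make Trend Analysis and Trend Projection a little more concrete, here is a minimal sketch in Python. It is only an illustration and is not taken from Cornish's book: it assumes the trend is roughly a straight line, fits it with ordinary least squares, and extends it forward. The function names and the yearly figures are hypothetical, made up for the example.

```python
def fit_linear_trend(years, values):
    """Fit a least-squares straight line; return (slope, intercept).

    The slope is the average rate of change per year, which is the
    quantity trend analysis tries to pin down.
    """
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def project(slope, intercept, year):
    """Extend the fitted line to a past or future year."""
    return slope * year + intercept


# Hypothetical yearly measurements of some trend (not real data).
years = [2009, 2010, 2011, 2012, 2013]
values = [10.0, 12.0, 14.0, 16.0, 18.0]

slope, intercept = fit_linear_trend(years, values)
print("rate of change per year:", slope)
print("projected value for 2015:", project(slope, intercept, 2015))
```

A straight line is the simplest possible model. Real trends often bend, level off, or reverse, which is why projection is normally combined with the other methods above, such as scenarios and historical analysis.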
